The Truth Curve: How to Choose the Right MVP Fidelity for Your Idea

The term MVP (Minimum Viable Product) has been so overused in product development conversations that it has nearly lost its meaning. In Eric Ries's original formulation in The Lean Startup, an MVP was the smallest possible experiment that would generate learning about the most critical assumption underlying a business idea. In practice, the term has come to mean 'the first version of a feature we ship', which is essentially a small feature release dressed up in validation language. This dilution costs teams money and time: when you call your first production release an MVP, you have likely already over-invested in fidelity by several steps.

The Truth Curve, a concept from Giff Constable's work on product experimentation and discussed in Jeff Gothelf's Lean UX practice, provides a framework for choosing the right level of experiment fidelity for the evidence you currently have. The core principle is simple: the more uncertainty you have about an idea, the lower the fidelity of experiment you need. High uncertainty deserves low-cost experiments. Only after you have reduced uncertainty through earlier experiments is it appropriate to invest in higher-fidelity tests. Teams that violate this principle — by building high-fidelity prototypes or full features for ideas they have not yet validated at a lower level — are systematically over-investing in the wrong places.

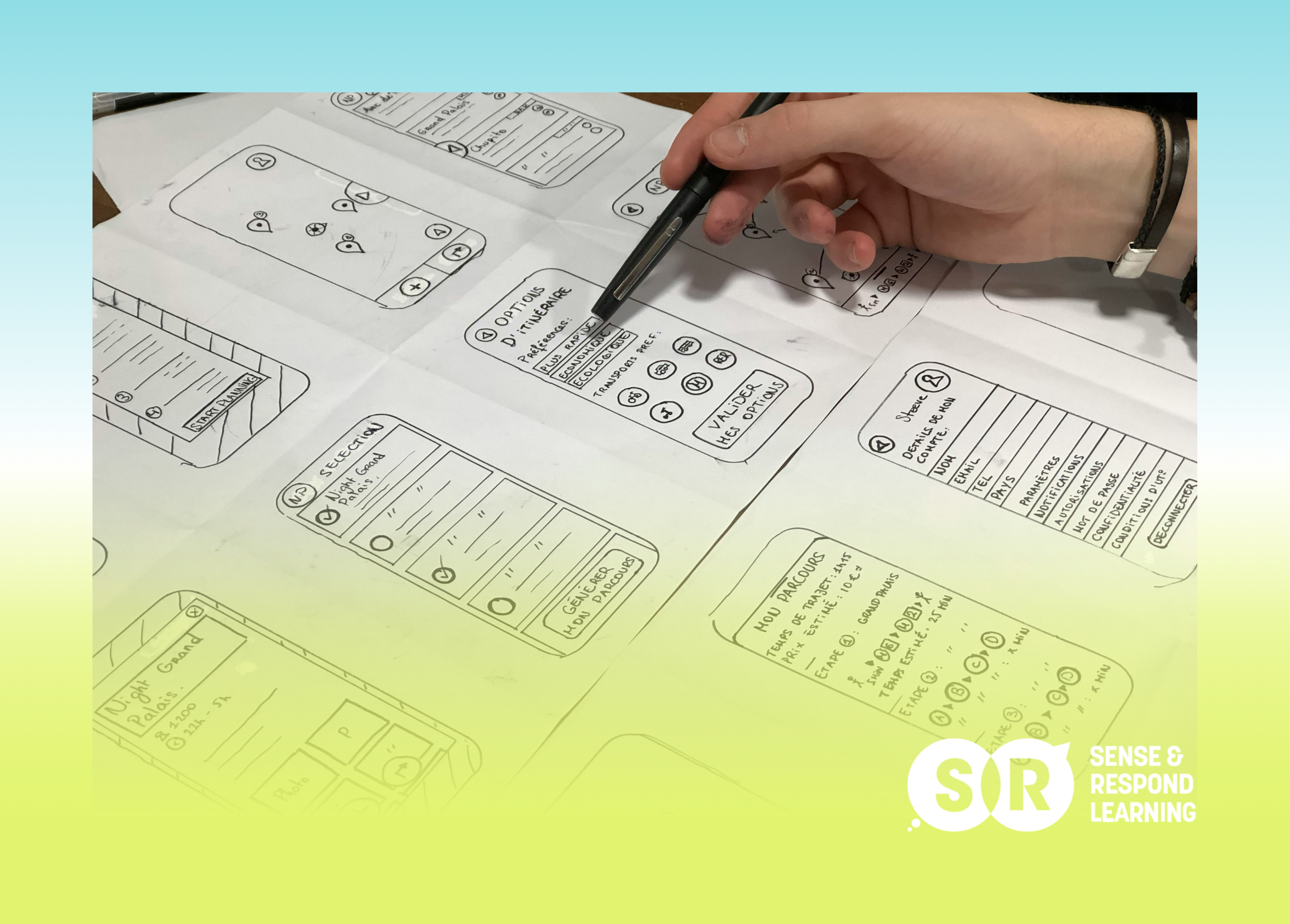

Low-fidelity experiments generate learning quickly and cheaply before you invest in building.

Understanding the Truth Curve

The Truth Curve maps experiment fidelity on one axis against the evidence already in hand on the other. When you have very little evidence, because an idea is new and most of your assumptions are untested, the appropriate experiment is low-fidelity and low-cost: a conversation with a few customers, a paper sketch, a rough mockup built in a day. These experiments generate learning quickly and cheaply, letting the team refine or discard ideas before any significant investment. As evidence accumulates and the team becomes more confident in the core assumptions, the appropriate level of fidelity increases: clickable prototypes, then functional prototypes, then limited-release code, then full production deployment.

The insight embedded in the Truth Curve is that each step up in fidelity should be justified by the evidence from the step before. You do not build a functional prototype until your clickable prototype has validated the core interaction model. You do not run a full A/B test until your functional prototype has validated the core value proposition. This sequential validation prevents what is perhaps the most common waste in product development: investing heavily in a solution before the problem it solves has been confirmed as real and significant enough to justify that investment. The Truth Curve is not a rigid checklist but a diagnostic tool. It asks: given what we know, are we investing at the right level of fidelity for this idea?
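One way to make the "each step justified by the one before" rule concrete is a small sketch like the one below. The level names follow the order described above, but the `Fidelity` enum and the `next_fidelity` function are hypothetical names introduced for illustration, and reducing evidence to a single `validated` flag is a simplifying assumption rather than part of the Truth Curve itself.

```python
from enum import IntEnum


class Fidelity(IntEnum):
    """Fidelity levels, lowest first, in the order the article lists them."""
    CUSTOMER_CONVERSATION = 1
    PAPER_SKETCH = 2
    CLICKABLE_PROTOTYPE = 3
    FUNCTIONAL_PROTOTYPE = 4
    LIMITED_RELEASE = 5
    FULL_PRODUCTION = 6


def next_fidelity(current: Fidelity, validated: bool) -> Fidelity:
    """Return the level to invest in next.

    Step up exactly one level only when the experiment at the current
    level produced positive evidence. Otherwise stay where you are and
    refine or discard the idea. (Assumption: evidence is a yes/no flag.)
    """
    if not validated or current is Fidelity.FULL_PRODUCTION:
        return current
    return Fidelity(current + 1)


# A validated clickable prototype justifies a functional prototype.
print(next_fidelity(Fidelity.CLICKABLE_PROTOTYPE, validated=True).name)
# An unvalidated paper sketch does not justify moving up.
print(next_fidelity(Fidelity.PAPER_SKETCH, validated=False).name)
```

In practice the decision is a judgment call rather than a boolean, but writing the rule down this way makes it obvious when a team is about to jump several levels without evidence from the one below.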

Low-Fidelity Experiments: Where Ideas Are Tested Cheaply

Low-fidelity experiments are the most underused tool in the product team's arsenal. They include customer conversations (direct, structured conversations, not surveys), paper prototypes, landing page tests, and 'Feature Fake' techniques such as buttons to nowhere. Their defining characteristic is that they can be created and run within hours or days rather than weeks. A five-user interview study run in a single afternoon can invalidate a major assumption that would otherwise have taken a team six weeks to build into a feature. The cost asymmetry between these two approaches, hours of research versus weeks of engineering, is so extreme that the only rational justification for skipping low-fidelity validation is time pressure so severe that it outweighs the risk of wasted investment.
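To get a feel for the size of that asymmetry, here is a back-of-the-envelope calculation. Only the "single afternoon" and "six weeks" come from the example above; the afternoon length, the number of researchers, the team size, and the working week are all assumed figures chosen for illustration, so substitute your own.

```python
# All figures except BUILD_WEEKS are illustrative assumptions.
INTERVIEW_HOURS = 4    # assumed length of "a single afternoon"
RESEARCHERS = 2        # assumed: one interviewer plus one note-taker
BUILD_WEEKS = 6        # from the example in the text
ENGINEERS = 4          # assumed team size
HOURS_PER_WEEK = 40    # assumed working week


def person_hours(people: int, hours: int) -> int:
    """Total effort as people multiplied by hours each."""
    return people * hours


research_cost = person_hours(RESEARCHERS, INTERVIEW_HOURS)
build_cost = person_hours(ENGINEERS, BUILD_WEEKS * HOURS_PER_WEEK)
ratio = build_cost / research_cost

print(f"research: {research_cost} person-hours")
print(f"build:    {build_cost} person-hours")
print(f"building costs {ratio:.0f}x more than asking first")
```

Under these assumptions the build costs 960 person-hours against 8 for the interviews, a ratio of 120 to 1. The exact number matters less than the order of magnitude.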

One barrier to low-fidelity experimentation is the 'it's not real' objection: 'Users can't give us useful feedback on something this rough.' This objection is almost always wrong. Users do not need to interact with a polished, functional prototype to tell you whether the underlying idea is useful. What they need is enough fidelity to understand what the thing would do for them. A paper sketch with a brief verbal explanation can be sufficient to validate whether a user finds a concept valuable. The key is testing the right assumption at each fidelity level: a paper sketch tests concept viability, not usability. Confusing what you can learn at each level of fidelity is one of the most common sources of wasted research effort.

Each step up in fidelity should be justified by positive evidence from the step before.

When to Increase Fidelity

The signal to increase fidelity is accumulated positive evidence from lower-fidelity experiments: multiple customer conversations confirm that the underlying problem is real and significant, a landing page experiment produces a strong conversion rate, or a clickable prototype test shows that users navigate the core workflow successfully and find it valuable. At each of these points, the team has reduced uncertainty enough to justify the greater investment of the next fidelity level. The key discipline is making this decision consciously, by explicitly asking 'What did we learn from the last round of experiments, and is it sufficient to justify moving to the next level of investment?', rather than defaulting to the next stage because it is the next step in the process.

There are also cases where skipping fidelity levels is genuinely appropriate. If you have significant prior evidence from comparable features or products, you may be able to start at a higher fidelity level. If the cost of a fidelity level is negligible — if you can build a functional prototype in the same time it would take to build a clickable mockup — then the distinction between levels may not matter in practice. The Truth Curve is a framework for thinking, not a rigid procedure. The goal is to match investment to evidence. How you achieve that match depends on your team's capabilities, the nature of the idea, and the evidence already available.

Applying the Truth Curve to Your Current Backlog

A practical way to apply the Truth Curve to your existing backlog is to sort your upcoming features by the number of unvalidated assumptions each contains. Features with many unvalidated assumptions — new concepts, unexplored user segments, novel interaction patterns — should be preceded by low-fidelity experiments before they enter the delivery track. Features with few unvalidated assumptions — iterative improvements on well-understood user workflows — can potentially move directly to development with minimal prior validation. This sorting exercise often reveals that teams are investing engineering effort in features that are riskier than they appear, and under-investing in discovery work for those features.
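The sorting exercise can be sketched in a few lines. Everything here is hypothetical: the `Feature` class, the `triage` function, the sample backlog items, and especially the `threshold` of two assumptions, which is an arbitrary cut-off you would tune to your own team's risk tolerance.

```python
from dataclasses import dataclass, field


@dataclass
class Feature:
    name: str
    # Assumptions the team has not yet tested with real evidence.
    unvalidated_assumptions: list[str] = field(default_factory=list)


def triage(backlog: list[Feature], threshold: int = 2):
    """Split a backlog into (discovery, delivery), riskiest first.

    Features with at least `threshold` unvalidated assumptions go to
    discovery for low-fidelity experiments; the rest can go to delivery.
    """
    ranked = sorted(
        backlog,
        key=lambda f: len(f.unvalidated_assumptions),
        reverse=True,
    )
    discovery = [f for f in ranked if len(f.unvalidated_assumptions) >= threshold]
    delivery = [f for f in ranked if len(f.unvalidated_assumptions) < threshold]
    return discovery, delivery


# Hypothetical backlog for illustration only.
backlog = [
    Feature("Faster checkout button", ["users notice the speed-up"]),
    Feature("AI meal planner", [
        "users want meal plans",
        "users trust automated suggestions",
        "a new segment will pay for it",
    ]),
    Feature("Fix typo on settings page"),
]

discovery, delivery = triage(backlog)
print("Needs experiments first:", [f.name for f in discovery])
print("Ready for delivery:", [f.name for f in delivery])
```

Counting assumptions is a crude proxy for risk, since one critical untested assumption can outweigh several minor ones. The point of the sketch is the ordering habit, not the arithmetic.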

The most common application of the Truth Curve is the product discovery session: a regular, dedicated meeting where the team reviews upcoming features, maps their current evidence level against the Truth Curve, and designs the appropriate next experiment for each. This session does not need to be long — 30 to 45 minutes per week, with the team's PM and lead designer, is sufficient for most teams. It creates a shared language around evidence levels and experiment types, and it prevents the gradual drift toward 'we are confident enough' thinking that leads teams to skip validation steps. Discipline in applying the Truth Curve is the difference between a team that validates assumptions and a team that merely claims to.

The Bottom Line

Every product idea starts its life with a set of assumptions that may or may not be true. The Truth Curve is a map for navigating those assumptions as efficiently as possible: test cheaply when uncertainty is high, invest more as evidence accumulates. Teams that internalize this principle stop asking 'When can we start building?' and start asking 'What do we need to learn before we build?' That shift in framing — from delivery urgency to evidence-based investment — is one of the most reliable indicators of a team that will consistently ship things that create real value rather than things that merely shipped on time.

Further Reading & External Resources

Lean UX (3rd Edition), Jeff Gothelf & Josh Seiden: the MVP and experimentation framework that underpins the Truth Curve approach

Talking to Humans, Giff Constable: a practical guide to customer discovery research

Running Lean, Ash Maurya: a systematic approach to iterating from Plan A to a plan that works

Want to go deeper? This post is part of the Sense & Respond Learning resource library — practical frameworks for product managers, transformation leads and executives who want to lead with outcomes, not outputs.

Explore the full library at https://www.senseandrespond.co/blog